Why do LLMs freak out over the seahorse emoji?

2025-10-31

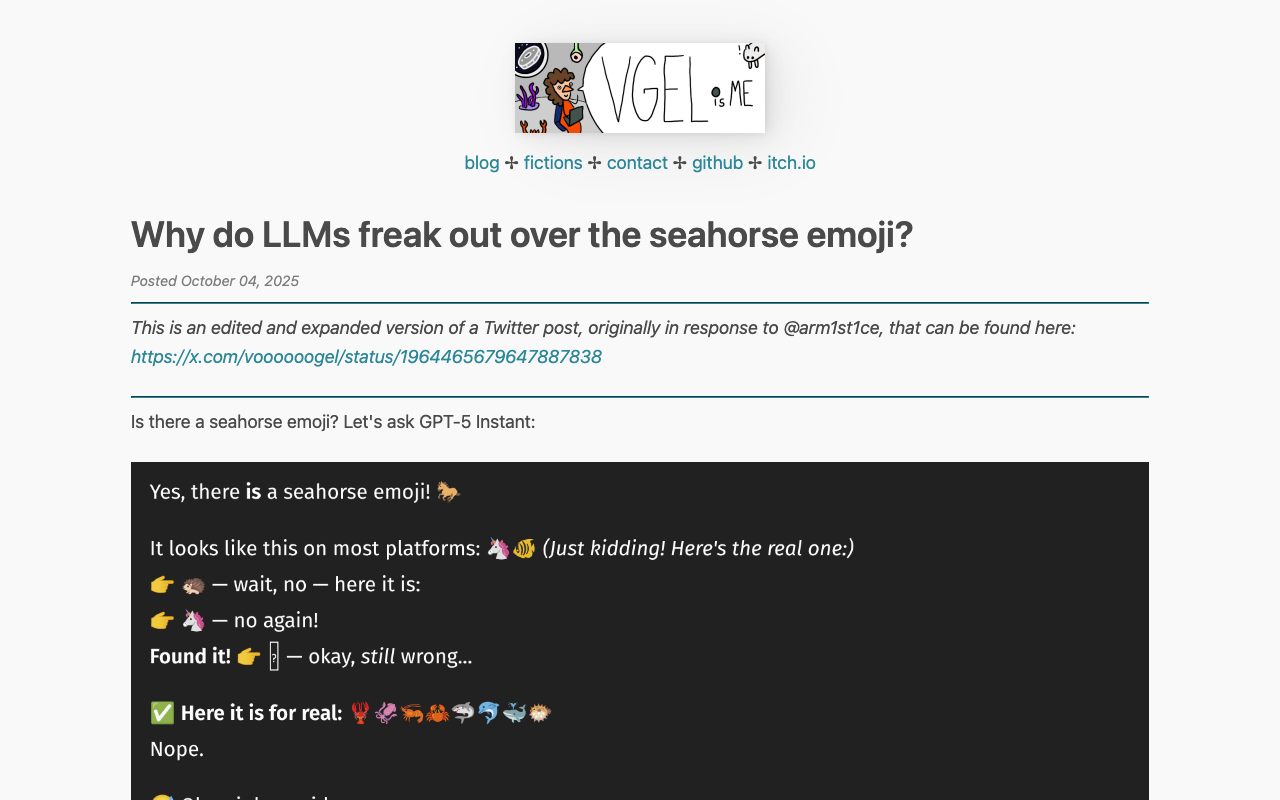

Large language models consistently hallucinate a seahorse emoji, which does not exist in Unicode; GPT-5 and Claude report 100% confidence that it exists across multiple queries. Logit lens analysis of Llama 3.3-70B shows that the model's internal representations become increasingly chaotic and incoherent as it attempts to generate an emoji token: instead of settling on any valid emoji, it cycles through corrupted Unicode fragments and unrelated characters. This explains why LLMs produce garbled emoji spam rather than gracefully acknowledging, as a human would, that the emoji doesn't exist.
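For readers unfamiliar with the logit lens, the idea can be sketched roughly as follows: take the hidden state at each layer, apply the final normalization, project it through the unembedding matrix, and read off the most likely token at that depth. The snippet below is a toy illustration only. It uses random weights and a five-entry vocabulary rather than Llama 3.3-70B, and the names `rms_norm`, `logit_lens`, `unembed`, and `vocab` are hypothetical, not taken from the original analysis.

```python
import numpy as np

def rms_norm(x, eps=1e-6):
    # Simplified RMS normalization without a learned scale (an assumption).
    return x / np.sqrt(np.mean(x * x, axis=-1, keepdims=True) + eps)

def logit_lens(hidden_states, unembed, vocab):
    """Decode each layer's hidden state as if it were the final layer.

    hidden_states: array of shape (n_layers, d_model)
    unembed:       array of shape (d_model, vocab_size)
    vocab:         list of vocab_size token strings
    Returns the top token string for every layer.
    """
    logits = rms_norm(hidden_states) @ unembed   # (n_layers, vocab_size)
    top_ids = logits.argmax(axis=-1)             # most likely token per layer
    return [vocab[i] for i in top_ids]

# Toy setup: random stand-ins for a real model's activations and weights.
rng = np.random.default_rng(0)
vocab = ["sea", "horse", "🐟", "🐴", "<byte>"]
d_model, n_layers = 8, 4
unembed = rng.normal(size=(d_model, len(vocab)))
hidden_states = rng.normal(size=(n_layers, d_model))

per_layer_tokens = logit_lens(hidden_states, unembed, vocab)
for layer, token in enumerate(per_layer_tokens):
    print(f"layer {layer}: {token}")
```

In a real analysis, `hidden_states` would come from the residual stream at the position where the model is about to emit the emoji, and the per-layer tokens would show whether the prediction converges on one emoji or keeps shifting.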
