microgpt

2026-03-31

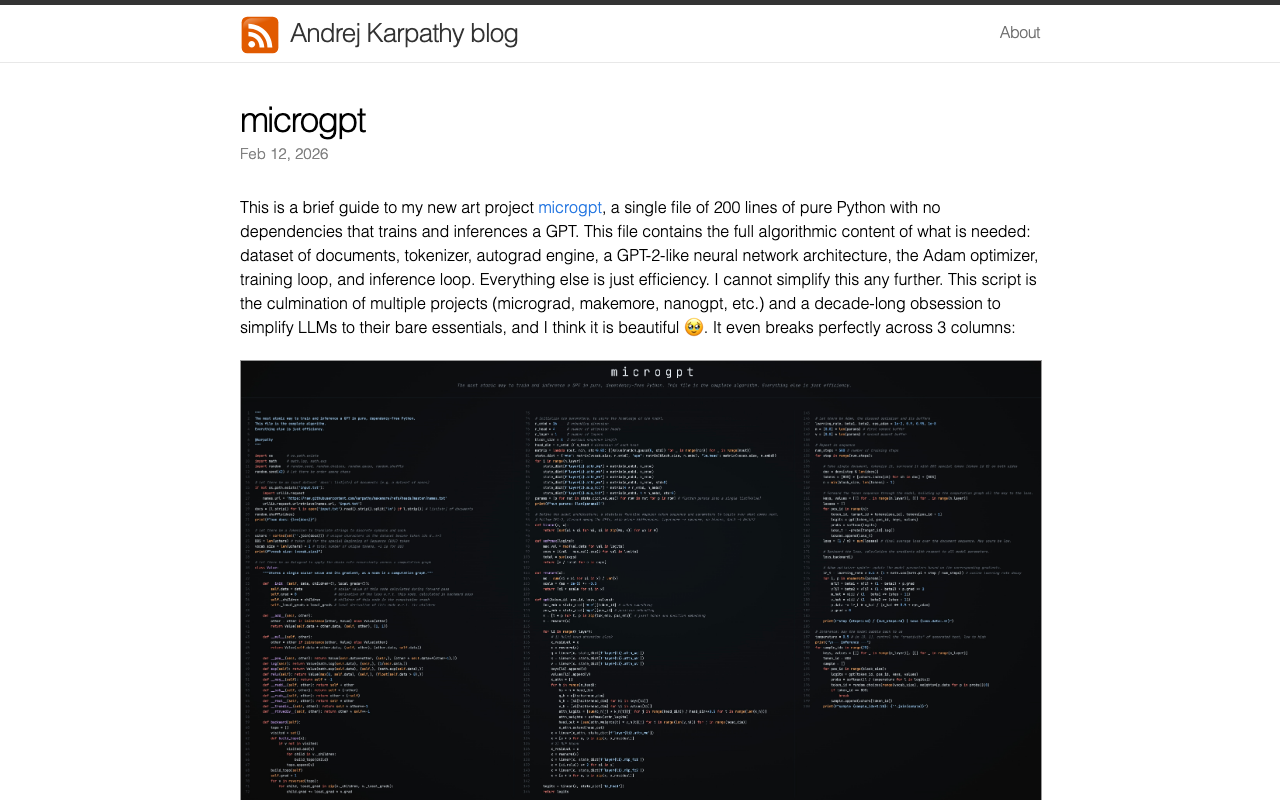

Everything you need to understand a GPT in 200 lines of Python: tokenizer, autograd engine, transformer, Adam optimizer, training and inference. One file, no dependencies. Trains on 32,000 names and shows how text becomes tokens, tokens become patterns, and patterns generate new text. The same mechanism ChatGPT uses, just small enough to read in an afternoon.
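As a rough illustration of the first step, "text becomes tokens", here is a minimal sketch of a character-level tokenizer. It is not taken from the microgpt file itself: the three-name list stands in for the real dataset, and the names `BOS`, `stoi`, `itos`, `encode`, and `decode` are assumptions chosen for this example.

```python
# Hypothetical stand-in for the names dataset (the real one has ~32,000 names).
names = ["emma", "olivia", "ava"]

# Vocabulary: every distinct character, sorted so ids are stable across runs.
chars = sorted(set("".join(names)))

# Assumption: one extra id marks both the start and the end of a name.
BOS = len(chars)
vocab_size = len(chars) + 1

stoi = {ch: i for i, ch in enumerate(chars)}  # character -> token id
itos = {i: ch for ch, i in stoi.items()}      # token id -> character


def encode(name):
    """Turn a name into a list of token ids, wrapped in BOS markers."""
    return [BOS] + [stoi[ch] for ch in name] + [BOS]


def decode(ids):
    """Turn token ids back into text, dropping the BOS markers."""
    return "".join(itos[i] for i in ids if i != BOS)


tokens = encode("emma")

# Training pairs for next-token prediction: each token predicts the one after it.
pairs = list(zip(tokens[:-1], tokens[1:]))

print(tokens)
print(pairs)
print(decode(tokens))
```

Each `(current, next)` pair is one training example: the model sees the current token and is asked to assign high probability to the next one. Generation would run the same idea in reverse, sampling one token at a time until the end marker comes back.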
