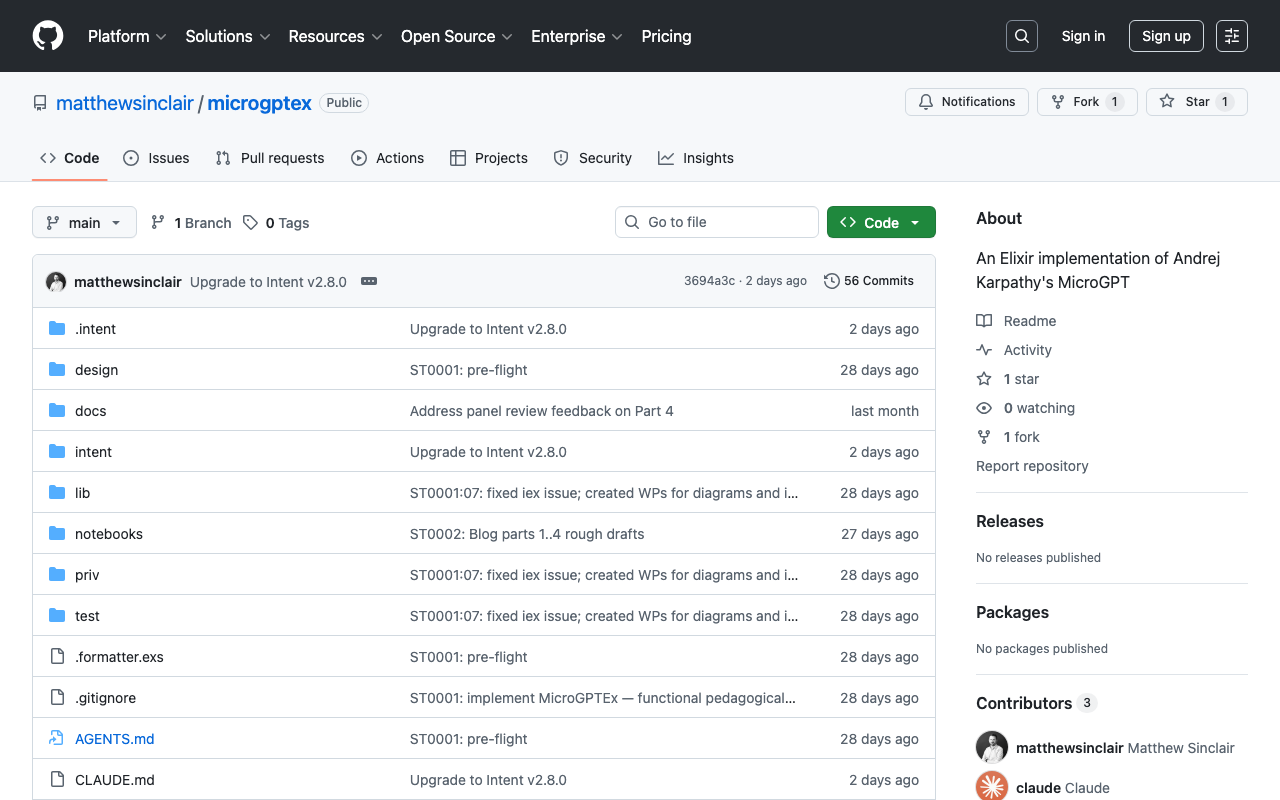

matthewsinclair/microgptex: A pure-functional Elixir version of MicroGPT

2026-03-31

Karpathy's MicroGPT, rewritten in pure functional Elixir with zero external dependencies. Nine modules cover reverse-mode autograd, multi-head self-attention with KV caching, Adam optimization, and autoregressive text generation. It is not meant for production; it is built to make transformer internals readable. Train it on character-level datasets (such as lists of names) with a configurable number of layers, embedding dimensions, attention heads, and learning rate. Run `Microgptex.run()` in IEx to watch it learn.
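To give a feel for what reverse-mode autograd looks like without mutable state, here is a minimal sketch in Elixir. It is not taken from the repository: the module name `AutogradSketch` and the functions `leaf/1`, `add/2`, `mul/2`, and `backward/1` are hypothetical names chosen for illustration, and it uses `make_ref/0` for node identity as a shortcut. Each node records its inputs together with the local derivative, and the backward pass threads a gradient map through a reduce instead of updating nodes in place.

```elixir
defmodule AutogradSketch do
  # Hypothetical illustration, not part of microgptex.
  # A node is a plain map: a unique id, a value, and a list of
  # {input_node, local_gradient} pairs.

  def leaf(data), do: %{id: make_ref(), data: data, parents: []}

  # d(a + b)/da = 1, d(a + b)/db = 1
  def add(a, b) do
    %{id: make_ref(), data: a.data + b.data, parents: [{a, 1.0}, {b, 1.0}]}
  end

  # d(a * b)/da = b, d(a * b)/db = a
  def mul(a, b) do
    %{id: make_ref(), data: a.data * b.data, parents: [{a, b.data}, {b, a.data}]}
  end

  # Returns a map of node id => gradient of `root` with respect to that node.
  def backward(root) do
    root
    |> topo_order()
    |> Enum.reduce(%{root.id => 1.0}, fn node, grads ->
      g = Map.get(grads, node.id, 0.0)

      # Chain rule: push g * local_gradient to every input, accumulating.
      Enum.reduce(node.parents, grads, fn {parent, local}, acc ->
        Map.update(acc, parent.id, g * local, &(&1 + g * local))
      end)
    end)
  end

  # Depth-first search; prepending on exit yields root-first order,
  # so every node is processed after all of its consumers.
  defp topo_order(root) do
    {_seen, order} = visit(root, {MapSet.new(), []})
    order
  end

  defp visit(node, {seen, order}) do
    if MapSet.member?(seen, node.id) do
      {seen, order}
    else
      {seen, order} =
        Enum.reduce(node.parents, {MapSet.put(seen, node.id), order}, fn {p, _}, st ->
          visit(p, st)
        end)

      {seen, [node | order]}
    end
  end
end

# z = x * y + x, so dz/dx = y + 1 and dz/dy = x
x = AutogradSketch.leaf(2.0)
y = AutogradSketch.leaf(3.0)
z = AutogradSketch.add(AutogradSketch.mul(x, y), x)
grads = AutogradSketch.backward(z)
IO.inspect({z.data, grads[x.id], grads[y.id]})
```

Because `x` feeds into `z` along two paths, its gradient is the sum of both contributions, which is why the backward pass accumulates with `Map.update/4` rather than overwriting.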
