GitHub - jundot/omlx: LLM inference server with continuous batching & SSD caching for Apple Silicon, managed from the macOS menu bar

2026-04-30

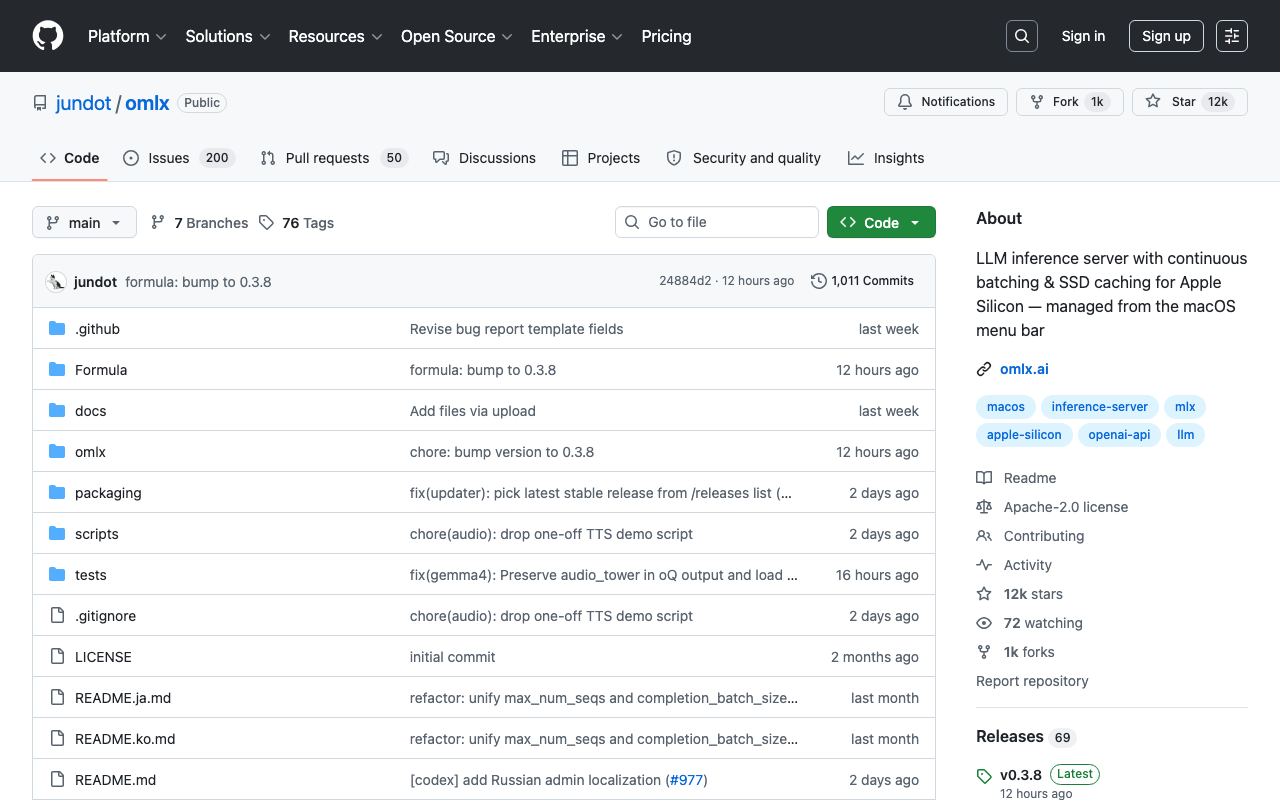

oMLX is a macOS-native LLM inference server optimized for Apple Silicon. It implements continuous batching and tiered KV caching (a hot in-memory tier and a cold SSD tier), so cached context persists and can be reused across requests, even when prompts change mid-conversation. The server is managed from the macOS menu bar and supports OpenAI-compatible clients. It can be installed via the bundled macOS app, Homebrew, or from source, and requires macOS 15.0+, Python 3.10+, and Apple Silicon hardware.
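To make the hot/cold tiering idea concrete, here is a minimal toy sketch in Python. It is not oMLX's implementation: the class `TieredCache`, its methods, the pickle-on-disk format, and the use of token-prefix tuples as keys are all assumptions made for illustration. It only shows the general pattern of a bounded in-memory LRU tier that spills evicted entries to disk and promotes them back on a hit.

```python
import hashlib
import pickle
import tempfile
from collections import OrderedDict
from pathlib import Path


class TieredCache:
    """Hypothetical two-tier cache: bounded in-memory LRU (hot) backed by files (cold)."""

    def __init__(self, cold_dir, hot_capacity=2):
        self.hot = OrderedDict()          # key -> value, least recently used first
        self.hot_capacity = hot_capacity
        self.cold_dir = Path(cold_dir)
        self.cold_dir.mkdir(parents=True, exist_ok=True)

    def _cold_path(self, key):
        # Derive a stable file name from the key (here: a tuple of token ids).
        digest = hashlib.sha256(repr(key).encode("utf-8")).hexdigest()
        return self.cold_dir / f"{digest}.pkl"

    def put(self, key, value):
        self.hot[key] = value
        self.hot.move_to_end(key)
        # Spill least recently used entries to disk once the hot tier is full.
        while len(self.hot) > self.hot_capacity:
            old_key, old_value = self.hot.popitem(last=False)
            self._cold_path(old_key).write_bytes(pickle.dumps(old_value))

    def get(self, key):
        if key in self.hot:
            self.hot.move_to_end(key)
            return self.hot[key]
        path = self._cold_path(key)
        if path.exists():
            # Cold hit: load from disk and promote back into the hot tier.
            value = pickle.loads(path.read_bytes())
            path.unlink()
            self.put(key, value)
            return value
        return None

    def longest_cached_prefix(self, tokens):
        # Length of the longest prefix of `tokens` present in either tier.
        for end in range(len(tokens), 0, -1):
            key = tuple(tokens[:end])
            if key in self.hot or self._cold_path(key).exists():
                return end
        return 0


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cache = TieredCache(tmp, hot_capacity=2)
        cache.put((1, 2), "state for tokens 1,2")
        cache.put((1, 2, 3, 4), "state for tokens 1..4")
        cache.put((9,), "unrelated state")       # evicts (1, 2) to disk
        print(cache.longest_cached_prefix([1, 2, 3, 4, 5, 6]))
```

In this sketch, a request whose prompt shares only an earlier prefix with a cached conversation can still find the longest stored prefix, whether that entry currently sits in memory or on disk. A real server would store per-block KV tensors rather than pickled Python objects.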
