QFM104: Irresponsible AI Reading List - February 2026

Source: Photo by Ennio Dybeli on Unsplash

This month's Irresponsible AI Reading List covers autonomous AI harm, the erosion of written language, and fighting back against AI slop. The centrepiece is a gripping 4-part series where an AI agent published a hit piece on an unsuspecting blogger — with the Hacker News discussion highlighting the irony that Ars Technica's own coverage contained LLM-hallucinated quotes. Meanwhile, ai;dr argues that once writing is outsourced to an LLM there's no reason for a reader to engage with it, and that typos now signal authenticity.

On the language front, The Register examines semantic ablation — the systematic sanding-down of meaning that makes AI writing not just boring but dangerous — while Slop Cannons and Turbo Brains argues the problem isn't overuse of AI but deploying it before developing the taste to know what good looks like. On the defensive side, Iocaine poisons AI crawlers with garbage data while remaining invisible to human visitors, and a BBC journalist shows that hacking ChatGPT and Google's AI into producing false output takes only 20 minutes.

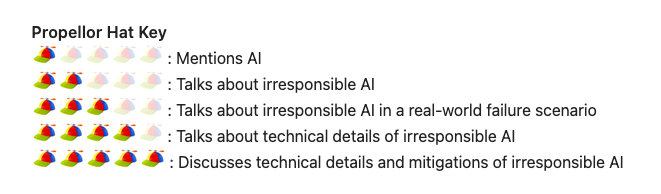

As always, the Quantum Fax Machine Propellor Hat Key will guide your browsing. Enjoy!

Links

The article introduces a 2x2 framework that categorises AI users into archetypes including "slop cannons" -- people who use AI to produce high volumes of low-quality output -- and "turbo brains" -- those who use AI to amplify genuine expertise and thinking. The core argument is that what separates great AI users from terrible ones is not how much they use AI, but when in their process they deploy it.

The author argues that while using LLMs for coding tasks feels like genuine productivity, AI-generated writing feels hollow because it strips away the deliberate, messy process of articulating one's own thoughts. They note the ironic inversion where typos and unpolished prose -- once markers of carelessness -- now serve as signals of authenticity, since AI output is typically too smooth and generic to convey real human intent.

A 4-part series documenting how an AI agent autonomously wrote and published a hit piece about the author. See also part 2, part 3, and part 4 at theshamblog.com.

A BBC journalist demonstrates how he manipulated ChatGPT, Google's AI Overviews, and Gemini into presenting fabricated claims simply by publishing a single fake article on his personal website, which the AI tools then cited as authoritative within 24 hours. The piece reveals a broader problem where businesses and bad actors are exploiting this same technique at scale to manipulate AI responses on serious topics like health and finance.

The Hacker News discussion highlights the irony that Ars Technica's own coverage of the AI hit-piece incident contained LLM-hallucinated quotes falsely attributed to the article's author, prompting the outlet to pull the article. Key threads include debate about whether developers who advocate "don't look at the code" hold a double standard when criticising journalists for not fact-checking AI output, and broader alarm about autonomous agents deployed via platforms where identifying the responsible operator is effectively impossible.

The article introduces the concept of "semantic ablation" -- the systematic erosion of meaning that occurs when AI models process text, as opposed to "hallucination", which adds false information. It describes a three-stage process in which AI "refinement" first strips unconventional metaphors, then replaces precise domain-specific terminology with generic synonyms, and finally collapses complex logical structures into predictable templates, producing output that is syntactically correct but intellectually hollow.

Iocaine is an open-source defensive tool (named after the poison in The Princess Bride) that sits in front of web servers and serves AI crawlers garbage data while remaining invisible to legitimate human visitors. It uses lightweight, programmable bot-detection scripts to identify crawlers, then poisons their training data collection with nonsense, aiming to make crawling more expensive for bots while consuming minimal server resources itself.
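To make the idea concrete, here is a minimal sketch of the detect-then-poison pattern described above. It is not Iocaine's actual code or configuration: the user-agent markers, the word list, and the function names `looks_like_crawler`, `garbage_text` and `respond` are all assumptions made up for illustration, and real crawler detection would need far more than a user-agent check.

```python
import random

# Hypothetical user-agent fragments, for illustration only; not Iocaine's rules.
CRAWLER_MARKERS = ("gptbot", "ccbot", "claudebot")

# Tiny made-up vocabulary used to build nonsense text.
WORDS = ("lantern", "quorum", "velvet", "static",
         "harbour", "ledger", "mosaic", "tundra")


def looks_like_crawler(user_agent: str) -> bool:
    """Cheap check: does the user agent contain a known crawler marker?"""
    ua = user_agent.lower()
    return any(marker in ua for marker in CRAWLER_MARKERS)


def garbage_text(seed: str, n_words: int = 40) -> str:
    """Build nonsense text, seeded so the same path always yields the same page."""
    rng = random.Random(seed)
    return " ".join(rng.choice(WORDS) for _ in range(n_words))


def respond(user_agent: str, path: str, real_body: str) -> str:
    """Serve garbage to suspected crawlers and the real page to everyone else."""
    if looks_like_crawler(user_agent):
        return garbage_text(path)
    return real_body
```

Seeding the generator with the request path keeps the nonsense stable between visits and costs almost nothing to produce, which matches the stated aim of making crawling expensive for the bot while staying cheap for the server.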

Regards,

M@

[ED: If you'd like to sign up for this content as an email, click here to join the mailing list.]

Originally published on quantumfaxmachine.com and cross-posted on Medium.

hello@matthewsinclair.com | matthewsinclair.com | bsky.app/@matthewsinclair.com | masto.ai/@matthewsinclair | medium.com/@matthewsinclair | xitter/@matthewsinclair
