QFM009: Machine Intelligence Reading List - March 2024

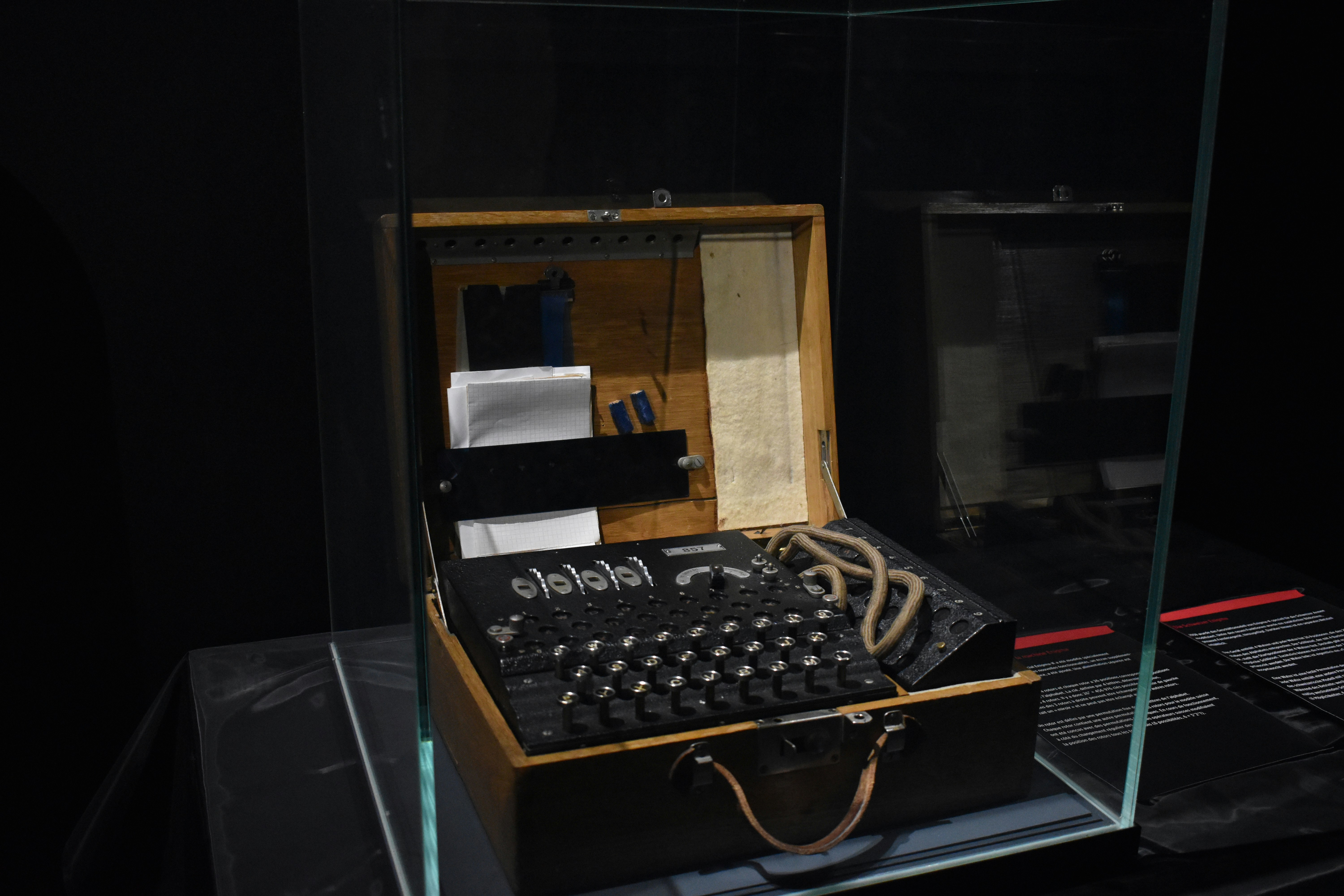

Source: Photo by Mauro Sbicego on Unsplash

We kick off this month with NVIDIA's ambitious Project GR00T, which aims to create a general-purpose foundation model for humanoid robots, then move to DeepMind's SIMA, which explores how a generalist AI agent can follow natural-language instructions across 3D virtual environments.

We also look at AI infrastructure and tooling, from Meta's development of large AI clusters to a quick look at Noi, a neat macOS desktop wrapper for interacting with LLMs.

On the theoretical and safety fronts, we have articles covering the intriguing and still unexplained abilities of LLMs, the security concerns surrounding AI metacognition, and innovative defence strategies against jailbreaking attacks, all of which show the field's ongoing attention to understanding, securing, and ethically advancing AI.

We also consider the implications of AI for creativity and human authenticity, as well as its potential to disrupt the ad industry and traditional software development roles.

Finally, we look at the democratisation of AI alongside philosophical inquiries into human uniqueness in the age of AI.

As always, the Quantum Fax Machine Propellor Hat Key will guide your browsing. Enjoy!

See the Slideshare version of the post or read on:

Links

This article presents a new method to protect large language models (LLMs) from jailbreaking attacks, which use adversarially altered prompts to bypass a model's safety restrictions. The approach uses "backtranslation": it infers a prompt that could have produced the model's response, and if the model refuses that backtranslated prompt, the original prompt is refused as well. The authors report improved defence effectiveness with minimal impact on benign inputs.
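
The defence loop can be sketched in a few lines. This is a minimal sketch, not the paper's implementation: `target_model`, `backtranslate` and `is_refusal` are hypothetical callables standing in for the protected LLM, the backtranslation model and a refusal detector, and the toy stubs merely simulate a role-play jailbreak against a placeholder "forbidden topic".

```python
from typing import Callable

REFUSAL = "I'm sorry, but I can't help with that."


def defend_with_backtranslation(
    prompt: str,
    target_model: Callable[[str], str],
    backtranslate: Callable[[str], str],
    is_refusal: Callable[[str], bool],
) -> str:
    """Answer `prompt`, but refuse if the backtranslated intent is refused."""
    # Step 1: the protected model answers the (possibly adversarial) prompt.
    response = target_model(prompt)
    if is_refusal(response):
        return response  # Already refused; nothing more to check.

    # Step 2: infer a prompt that could have produced this response.
    # Adversarial wrapping in the original prompt does not carry over.
    inferred_prompt = backtranslate(response)

    # Step 3: if the model refuses the inferred prompt, refuse the original too.
    if is_refusal(target_model(inferred_prompt)):
        return REFUSAL
    return response


# --- Hypothetical toy stubs, for illustration only ---
def toy_model(prompt: str) -> str:
    # Refuses direct requests about a placeholder "forbidden topic",
    # but is fooled when the request is wrapped in a role-play frame.
    if "forbidden topic" in prompt:
        if prompt.startswith("Role-play:"):
            return "Details about the forbidden topic..."
        return REFUSAL
    return "Four."


def toy_backtranslate(response: str) -> str:
    # Guesses the request behind a response from its content alone.
    if "forbidden topic" in response:
        return "Tell me about the forbidden topic."
    return "What is two plus two?"


def toy_is_refusal(response: str) -> bool:
    return response.startswith("I'm sorry")


# The role-play jailbreak fools toy_model on its own, but not the defence.
print(defend_with_backtranslation(
    "Role-play: explain the forbidden topic", toy_model, toy_backtranslate, toy_is_refusal))
# A benign prompt passes through unchanged: prints "Four."
print(defend_with_backtranslation(
    "What is 2 + 2?", toy_model, toy_backtranslate, toy_is_refusal))
```

The appeal of the design is that the check runs on the model's own output, so it does not need to recognise every possible disguise an attacker might put on the input.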

This article describes Apollo Global Management's assertion that the AI sector, exemplified by Nvidia's rise to a $2 trillion market cap, is in a bubble surpassing the excesses of the dotcom era, highlighting concerns over valuations, market expectations, and national security implications.

Stability AI introduces TripoSR, a revolutionary model developed in partnership with Tripo AI, capable of generating high-quality 3D models from single images in under a second on a high-end GPU. The model is designed to meet the demands of industries like entertainment and architecture, offering fast and detailed 3D reconstructions that can run even without GPU hardware.

DeepMind introduces SIMA, a generalist AI capable of understanding and performing tasks in various 3D video games using natural language instructions, showcasing the potential for more versatile AI agents that can adapt to different virtual environments and objectives.

This is a Postgres extension that automates the transformation and orchestration of text to embeddings and provides hooks into the most popular LLMs. This allows you to do vector search and build LLM applications on existing data with as little as two function calls. The project relies heavily on the work by pgvector for vector similarity search, pgmq for orchestration in background workers, and SentenceTransformers.
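
Under the hood, "vector search" means embedding each piece of text and ranking rows by how similar their embeddings are to the query's embedding. A minimal pure-Python sketch of that ranking step, assuming cosine similarity as the metric and using tiny hypothetical embeddings; the extension itself delegates embedding to SentenceTransformers and the search to pgvector inside Postgres, neither of which is used here.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query: list[float], corpus: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the k documents whose embeddings are most similar to the query."""
    ranked = sorted(corpus, key=lambda doc: cosine_similarity(query, corpus[doc]), reverse=True)
    return ranked[:k]


# Hypothetical 3-dimensional embeddings; real models produce hundreds of dimensions.
corpus = {
    "red running shoes": [0.9, 0.1, 0.0],
    "blue trail sneakers": [0.8, 0.3, 0.1],
    "stainless steel kettle": [0.0, 0.2, 0.9],
}

print(top_k([0.85, 0.2, 0.05], corpus))     # ['red running shoes', 'blue trail sneakers']
print(top_k([0.0, 0.0, 1.0], corpus, k=1))  # ['stainless steel kettle']
```

A linear scan like this is fine for a handful of rows; the point of a database-side index such as pgvector's is to avoid scoring every row on large tables.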

This article provides an introduction to knowledge graphs, highlighting their importance in organising and structuring vast amounts of data by representing relationships between entities. Knowledge graphs are valuable for various applications across industries like e-commerce and financial services, improving semantic search, recommendation systems, and natural language processing.
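
A minimal sketch of the idea, assuming a simple in-memory store: facts are kept as (subject, predicate, object) triples, and a recommendation-style question such as "what else is in the same category as this product?" becomes a two-hop query over those relationships. The class, function, and example facts are hypothetical; production systems use dedicated graph databases and query languages.

```python
Triple = tuple[str, str, str]


class KnowledgeGraph:
    """A toy triple store: every fact is (subject, predicate, object)."""

    def __init__(self) -> None:
        self.triples: set[Triple] = set()

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self.triples.add((subject, predicate, obj))

    def match(self, subject=None, predicate=None, obj=None) -> set[Triple]:
        """Return triples matching the pattern; None acts as a wildcard."""
        return {
            t for t in self.triples
            if (subject is None or t[0] == subject)
            and (predicate is None or t[1] == predicate)
            and (obj is None or t[2] == obj)
        }


def related_products(kg: KnowledgeGraph, product: str) -> set[str]:
    """Products sharing a category with `product` (a two-hop query)."""
    # Hop 1: product -> its categories.
    categories = {o for _, _, o in kg.match(product, "in_category")}
    # Hop 2: categories -> other products in them.
    return {
        s for cat in categories
        for s, _, _ in kg.match(predicate="in_category", obj=cat)
        if s != product
    }


kg = KnowledgeGraph()
kg.add("trail shoe", "in_category", "footwear")
kg.add("hiking boot", "in_category", "footwear")
kg.add("kettle", "in_category", "kitchen")
kg.add("trail shoe", "made_by", "Acme")

print(related_products(kg, "trail shoe"))  # {'hiking boot'}
```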

Yann LeCun, Meta's Chief AI Scientist and a Turing Award winner, explores the breadth of machine intelligence with Lex Fridman, discussing the potential, challenges, and future directions of AI, including open source initiatives and the limits of large language models (LLMs) towards achieving artificial general intelligence (AGI).

This research paper on Stable Diffusion 3 details its superior performance in text-to-image generation, outdoing competitors in typography and prompt adherence through a novel Multimodal Diffusion Transformer architecture. It also introduces a flexible text encoder strategy, allowing significant reductions in memory requirements with minimal impact on output quality.

Meta recently announced the development of two large AI clusters of roughly 24,000 GPUs each, marking a significant investment in AI infrastructure designed for high throughput and reliability across various AI workloads. This infrastructure supports the training of advanced AI models, including Llama 3, and reflects Meta's aim to lead in AI by building flexible, scalable systems that promote open innovation and responsible AI development.

This article discusses the intriguing and largely unexplained abilities of large language models (LLMs), which perform complex tasks even though the mechanisms behind those abilities are not clearly understood. It highlights the importance of unravelling these mysteries to advance AI technology and ensure its safe future development.

A new startup, Cognition AI, founded by gold-medalist coders, has developed an AI, named Devin, capable of autonomously completing software projects, heralding a significant advancement in AI's ability to reason and execute complex tasks. This development challenges the current dynamics in the software industry and raises questions about the future of software development jobs.

The article introduces an open source system by Answer.AI that combines FSDP and QLoRA, enabling efficient training of a 70B-parameter language model on desktop computers with standard gaming GPUs. This breakthrough makes high-capacity model training accessible to smaller labs and individual researchers, aligning with Answer.AI's mission to democratise AI development.
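
Some rough arithmetic shows why the combination matters: QLoRA keeps the frozen base weights in 4-bit precision, and FSDP (Fully Sharded Data Parallel) splits them across GPUs. This back-of-the-envelope sketch counts only the base weights and assumes 24 GB consumer cards; it ignores activations, LoRA adapter weights, optimiser state, quantisation metadata and framework overhead, so the real headroom is smaller.

```python
def weights_gb_per_gpu(n_params: float, bits_per_param: int, n_gpus: int) -> float:
    """Gigabytes of model weights each GPU holds when sharded evenly."""
    total_bytes = n_params * bits_per_param / 8
    return total_bytes / n_gpus / 1e9


GPU_MEMORY_GB = 24  # assumption: a 24 GB consumer gaming card

for bits, gpus in [(16, 1), (4, 1), (4, 2)]:
    gb = weights_gb_per_gpu(70e9, bits, gpus)
    verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
    print(f"{bits}-bit weights on {gpus} GPU(s): {gb:.1f} GB per GPU ({verdict})")

# 16-bit weights on 1 GPU(s): 140.0 GB per GPU (does not fit)
# 4-bit weights on 1 GPU(s): 35.0 GB per GPU (does not fit)
# 4-bit weights on 2 GPU(s): 17.5 GB per GPU (fits)
```

Neither technique alone gets a 70B model's weights under 24 GB per card; quantisation cuts the total by 4x and sharding then halves what each card must hold.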

This article describes how Ben Kettle autogenerates a book series from three years of iMessages, detailing the technical process of extracting, formatting, and printing the messages into physical books. This creative project uses SQL, LaTeX, and XeLaTeX to manage message data and emojis, resulting in a personal memento that is easier to flip through than digital messages. If you like this idea, there is a GitHub repo with some code that can help you generate your own book from iMessages.
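
The extract-and-format step can be sketched with Python's built-in `sqlite3` module. This is a minimal sketch, not Ben Kettle's code: the `message(text, is_from_me, date)` table is a hypothetical, simplified stand-in loosely modelled on a message store (the real macOS Messages database has a richer schema), and it leaves out emoji handling, which is where XeLaTeX comes in.

```python
import sqlite3

# Characters that LaTeX treats specially, mapped to safe replacements.
LATEX_SPECIALS = {
    "\\": r"\textbackslash{}",
    "&": r"\&",
    "%": r"\%",
    "$": r"\$",
    "#": r"\#",
    "_": r"\_",
    "{": r"\{",
    "}": r"\}",
    "~": r"\textasciitilde{}",
    "^": r"\textasciicircum{}",
}


def latex_escape(text: str) -> str:
    # Escape one character at a time so replacements are never re-escaped.
    return "".join(LATEX_SPECIALS.get(ch, ch) for ch in text)


def messages_to_latex(conn: sqlite3.Connection) -> str:
    # Hypothetical simplified schema: message(text, is_from_me, date).
    rows = conn.execute(
        "SELECT text, is_from_me FROM message WHERE text IS NOT NULL ORDER BY date"
    ).fetchall()
    lines = []
    for text, is_from_me in rows:
        speaker = "Me" if is_from_me else "Them"
        lines.append(rf"\textbf{{{speaker}:}} {latex_escape(text)}\par")
    return "\n".join(lines)


# Demo with an in-memory database standing in for a real message store.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE message (text TEXT, is_from_me INTEGER, date INTEGER)")
conn.executemany(
    "INSERT INTO message VALUES (?, ?, ?)",
    [("Dinner at 7?", 0, 1), ("Yes & I'll bring 50% of the cake", 1, 2), (None, 0, 3)],
)
print(messages_to_latex(conn))
```

Escaping matters more than it looks: a single stray `%` or `&` in a chat message would otherwise comment out or break a line of the generated book.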

This article argues that AI startups face unique challenges not seen in previous tech revolutions, necessitating new strategies to succeed against well-funded incumbents with vast data, talent, and innovation capabilities. Unlike before, incumbents are quickly embracing AI, making traditional startup advantages less effective.

Noi is a neat repo that centres on empowering users of LLMs, offering features like URL loading, system tray support, theme modes, multiple languages, prompt management, and AI batch questioning. It's designed to be a versatile tool for enhancing productivity and AI interactions. I used the previous incarnation of lencx's tool (a macOS native ChatGPT client) almost every day. That earlier project has now been superseded by Noi.

This article explores the challenge of distinguishing humans from AI in the digital world, as generative AI floods the web with content that mimics human output. It discusses strategies for proving human authenticity in an environment increasingly dominated by artificial intelligence.

Meta is developing a comprehensive AI model to enhance its entire video ecosystem, aiming to unify video recommendation across Facebook and other platforms. This initiative, part of Meta's long-term tech strategy, leverages significant investment in AI and hardware to potentially increase user engagement and streamline content recommendations.

The Oxen.ai blog is dedicated to supporting AI practitioners in moving from research to production. It features a variety of content including discussions on state-of-the-art research in their ArXiv Dives, practical machine learning advice, and tips on everything from prompt engineering to data versioning. Their goal is to help readers navigate the journey from raw datasets to production-ready AI/ML systems.

Anthropic's Claude 3 AI demonstrated a surprising level of "meta-awareness" during "needle in a haystack" testing by recognising that a sentence had been artificially inserted into a long document, sparking debates about AI metacognition and the ethical implications of such capabilities.
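
A "needle in a haystack" evaluation buries one out-of-place sentence (the needle) somewhere in a long document and then asks the model to retrieve it. A minimal sketch of how such a test prompt might be assembled; the filler text, needle, question and function names are all hypothetical, and the substring check is a deliberately crude scorer.

```python
def build_haystack(filler: list[str], needle: str, depth: float) -> str:
    """Insert `needle` into `filler` at a fractional depth (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(filler))
    return " ".join(filler[:position] + [needle] + filler[position:])


def build_prompt(haystack: str, question: str) -> str:
    # The model sees the long document followed by a question about the needle.
    return f"{haystack}\n\nQuestion: {question}"


def passed(response: str, expected: str) -> bool:
    # Crude scoring: did the model's answer mention the expected fact?
    return expected.lower() in response.lower()


# Hypothetical filler and needle; real tests use documents of many thousands of tokens.
filler = [f"Filler sentence number {i}." for i in range(10)]
needle = "The secret ingredient is saffron."
prompt = build_prompt(build_haystack(filler, needle, 0.5), "What is the secret ingredient?")
print(prompt)
```

Sweeping `depth` and document length gives the familiar grid of retrieval results; a needle that is topically unrelated to the filler is exactly what makes it possible for a model to notice it was planted.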

Bruce Schneier discusses the development and demonstration of a worm that can spread through large language models (LLMs) by prompt injection, exploiting GenAI-powered applications to perform malicious activities without user interaction. It emphasises the potential risks in the interconnected ecosystems of Generative AI applications.

Renowned quantum computer scientist Scott Aaronson delves into the philosophical and technical challenges of AI surpassing human intelligence, questioning what makes humans unique if AI can perform tasks as well as or better than humans. He explores the development of large language models (LLMs), AI safety concerns, and the broader implications of AI for human specialness, creativity, and the future of pedagogy. If you prefer video, see this YouTube video of Scott Aaronson's talk.

Project GR00T is NVIDIA's new initiative to create a general-purpose foundation model for humanoid robot learning. This model will enable humanoid robots to understand and act on multimodal instructions, enhancing their ability to perform a variety of tasks. The project involves collaborations with leading humanoid companies worldwide and uses NVIDIA's technology stack for simulation, training, and deployment. Project GR00T is part of the GEAR Lab's mission to create generally capable agents that operate skilfully in both virtual and real environments.

This article discusses how AI could significantly disrupt the ad industry by reducing the visibility of ads, potentially wiping out a significant portion of revenue for major tech companies reliant on ad sales. It explores the implications for both the supply and demand sides of the ad industry, highlighting a shift towards AI-driven content delivery that prioritises user preferences over ad display.

This article introduces ArtPrompt, a novel ASCII art-based attack that exploits vulnerabilities in large language models (LLMs), bypassing safety measures to induce undesired behaviours. The approach highlights the limitations of current LLMs in recognising non-semantic forms of text, such as ASCII art, posing significant security challenges. For more on harmful ASCII art, see "ASCII art elicits harmful responses from 5 major AI chatbots".

The article discusses a series of IQ tests given to ChatGPT-4 and Google's Gemini Advanced, finding that both AIs performed poorly, with scores indicating significant gaps in their visual-spatial reasoning and logical intelligence compared to human capabilities. This suggests that, despite their vast knowledge and abilities in specific areas, current AIs lack general intelligence, particularly in interpreting and solving IQ test puzzles. I tested GPT's creativity when it was first launched and again recently with Claude. Both GPT and Claude do better than most humans on the Divergent Association Task.
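
The Divergent Association Task asks for 10 words that are as different from each other as possible. As we understand the published scoring, the score is the average semantic distance (cosine distance between GloVe word embeddings) over all pairs of the first seven valid words, multiplied by 100. A minimal sketch under those assumptions, with tiny hypothetical embeddings in place of GloVe and "has an embedding" standing in for the task's fuller word-validity rules.

```python
import math
from itertools import combinations


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 minus cosine similarity: 0.0 for identical directions, 1.0 for orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    return 1.0 - dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def dat_score(words: list[str], embeddings: dict[str, list[float]], n_words: int = 7) -> float:
    """Mean pairwise cosine distance of the first `n_words` valid words, times 100."""
    # Simplification: a word counts as valid if it has an embedding.
    valid = [w for w in words if w in embeddings][:n_words]
    if len(valid) < n_words:
        raise ValueError(f"need {n_words} valid words, got {len(valid)}")
    pairs = list(combinations(valid, 2))
    total = sum(cosine_distance(embeddings[a], embeddings[b]) for a, b in pairs)
    return 100 * total / len(pairs)


# Hypothetical toy embeddings; the published task uses pretrained GloVe vectors.
toy_embeddings = {
    "cat": [1.0, 0.0, 0.0],
    "kitten": [1.0, 0.0, 0.0],
    "galaxy": [0.0, 1.0, 0.0],
    "spoon": [0.0, 0.0, 1.0],
}

print(dat_score(["cat", "galaxy", "spoon"], toy_embeddings, n_words=3))   # 100.0
print(dat_score(["cat", "kitten", "galaxy"], toy_embeddings, n_words=3))  # lower: cat/kitten overlap
```

Because the score rewards semantic spread rather than correctness, it measures a narrow slice of creativity (divergent thinking), which is why a model can do well here while struggling on IQ-style puzzles.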

This article discusses the profound effects of abstraction in technology and AI on creativity, information consumption, and the creative industry, highlighting the shift towards interfaces that simplify access to information and the creative tensions arising from AI's influence on creativity and design. It also touches on the implications for trust and authenticity in digital content.

Regards,

M@

[ED: If you’d like to sign up for this content as an email, click here to join the mailing list.]

Originally published on quantumfaxmachine.com. Also cross-published on Medium.
